We have been bombarded by the media lately with news of infanticide, socialism, women’s rights (or what is masquerading as such), and forced medical treatments.

I am here to tell you all that the God who made us did so in His perfect image. While we do have sin in the world, there is nowhere in the Bible that God tells us to rely on the world instead of Him. Nowhere does He say we should stray from His teachings.

Our religious freedoms are under attack daily in the media and among our lawmakers. If we stick our heads in the sand, we will end up with a mouthful of sand and a nation under the rule of the liberal left. There has got to be action on our part. That is what the parable of the Good Samaritan in Luke 10:25-37 is about. We are not only learning who our neighbors are but also that we are called to action. Tim Keller writes at length on this in his book Ministries of Mercy.

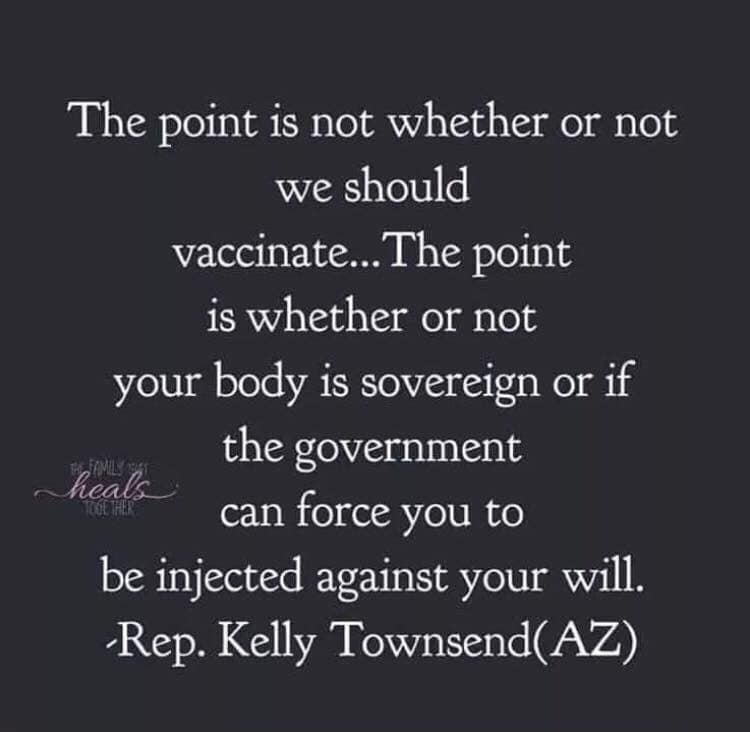

I am also here to tell you that your body is sovereign from the government. You are not owned by them, nor should you place your trust or empowerment in them. You answer to the one true God of Abraham, Isaac, and Jacob. You believe in the Savior, Jesus Christ. You know that this earth is passing and all things with it, but that you have heaven to look forward to. I am not saying you should sit idly back and eat what you want or destroy the earth that your food is grown in. In fact, I am saying you need to start looking more closely at all of these things. I am saying that God gave us the laws of Leviticus on eating and taking care of the earth so that we might have good health, and so that we would come to rely on Him and Him only for all things that trouble us and trouble our neighbors.

Therefore, I state outright: do not give to others what is not theirs to take. I know that we have become a nation that relies on doctors and mass-produced food, and that we accept all of it as the standard. But when we have babies who are getting cancers, seizures, and countless other diseases, we have to question this standard and go back to what our God has told us and has carefully laid out for us to follow. It certainly wasn’t to throw our hands up and say things like, “Well, my doctor is a Christian, so he must be right.” Oh, my dear brothers and sisters in Christ, those doctors, even the Christian ones, are sinners just like you and me. And sin doesn’t always mean malicious intent. Sin is literally our separation from God. And that separation looks like many things, even believing in a system that has its misguidances and corruptions. If we peeled back the layers of the onion, we would see that since the people involved in the medical system are not all Christians, there must be sin going on, and even if they were all Christians, there would be sin going on, from what they were taught in med school to what is being practiced in the field.

So I conclude by stating: before you throw your hands up and believe the media hype and fear mongering, before you divide and classify people based on your own biases, make sure of one thing: is it in Scripture? Is it Biblically sound? Does what you are thinking or saying come from the point of the Gospel?
